In TheContentMine, funded by the Shuttleworth Foundation, we aim to extract 100 million facts from the scientific literature. That’s a large number, so here’s our thinking.

What is a fact?

Common Mist Frog (Litoria rheocola) of northern Australia. Credit: Damon Ramsey / Wikimedia / CC BY-SA

“Fact” means slightly different things to philosophers, lawyers, scientists and advertisers. Wikipedia describes facts in science as follows:

a fact is a repeatable careful observation or measurement (by experimentation or other means), also called empirical evidence. Facts are central to building scientific theories.

and

a scientific fact is an objective and verifiable observation, in contrast with a hypothesis or theory, which is intended to explain or interpret facts.

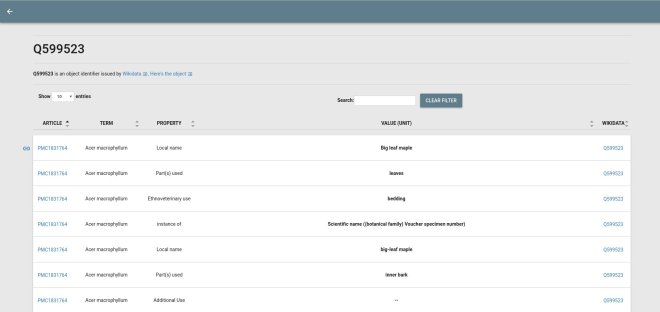

In ContentMine we highlight the “objective”, i.e. people will agree that they are talking about the same things in the same way. We concentrate on facts reported in scientific papers, and my colleague Ross Mounce showed (Daily updates on IUCN Red List species) … some excellent examples about a mistfrog [1]. Here are some examples he quoted:

- …Litoria rheocola is a small treefrog (average male body size: 2.0 g, 31 mm; average female body size: 3.1 g, 36 mm [20])

- the common mistfrog (Litoria rheocola), an IUCN Endangered species [22] that occurs near rocky, fast-flowing rainforest streams in northeastern Queensland, Australia [23]…

- we tracked frogs using harmonic direction finding [32,33].

- individuals move along and at right angles to the stream

- Fig 3. Distribution of estimated body temperatures of common mistfrogs (Litoria rheocola) within categories relevant to Batrachochytrium dendrobatidis growth in culture (<15°C, 15–25°C, >25°C). [Figure]

All of the above, including the components of the graph, are FACTS. They have these features:

- they are objective. They may or may not be “true” – another author might dispute the sizes of the frogs or where they live – but the authors have stated them as facts.

- they can be represented in formal representations without losing meaning or precision. There are normally very few different ways of representing such facts. “Alauda arvensis sing on the wing” is a fact. “Hail to thee blithe spirit, bird thou never wert” is not a fact.

- they are uncopyrightable. We contend that all the facts we extract are uncopyrightable statements and therefore release them as CC0.

How do we represent facts? Generally they are a mixture of simple natural language statements and formal specifications. “A brown frog that lives in Queensland” is adequate; “L. rheocola. colour: brown; habitat: Queensland” says the same, slightly more formally. Formal language is useful for us as it’s easier to extract. The form:

object: name; property1: value1 ; property2: value2

is very common and very useful. Often it’s put in a table, graph or diagram. Transforming between these is one of the strengths of ContentMine software. The box plots could be put in words: “In winter in Windin Creek between 0 and 12% of the frogs had body temperatures below 15 Celsius”, but the plot may be more useful to some scientists (note the redundancy!).
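The “object: name; property: value” form described above can be read mechanically. Here is a minimal sketch in Python, assuming fields are separated by semicolons and each field has a single colon between key and value; the function name `parse_fact_record` is hypothetical and is not part of the ContentMine software.

```python
def parse_fact_record(text):
    """Split 'key: value; key: value' text into a dict (hypothetical helper)."""
    record = {}
    for field in text.split(";"):
        if not field.strip():
            continue  # ignore empty fields, e.g. after a trailing semicolon
        # Split on the first colon only, so values may themselves contain colons.
        key, _, value = field.partition(":")
        record[key.strip()] = value.strip()
    return record


# Illustrative input built from the frog example in the text.
record = parse_fact_record("object: L. rheocola; colour: brown ; habitat: Queensland")
print(record)
```

Once a fact is in this keyed form, writing it out as a table row or a sentence is a matter of formatting, which is why the formal version is easier to work with.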

So the scientific observations – temperatures, locations, dates – are all Facts. The sentence contains 7 factual concepts: winter, Windin Creek, 0%, 12%, L. rheocola, body temperature, < 15 C. In ContentMine we refer to all of these as “Facts”. Perhaps more formally we might say:

section-i/para-j/sentence-k in doi:10.1371/journal.pone.0127851 contains Windin Creek

section-i/para-j/sentence-k in doi:10.1371/journal.pone.0127851 contains L. rheocola

Those who like RDF (we sometimes use it) may regard these as triples (document-component contains entity). In a similar manner, linked data such as that in Wikidata should be regarded as Facts (which is why we are working with Wikidata to export extracted facts there).
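To make the triple view concrete, here is a small sketch in Python of “document-component contains entity” statements. The `Triple` type, the `contains_triples` helper and the use of `#` to join the DOI to the component path are all assumptions made for illustration; this is not real RDF serialisation and not the ContentMine data model.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """A (subject, predicate, object) statement; illustrative only."""
    subject: str
    predicate: str
    obj: str


# DOI of the paper cited in footnote [1].
DOC = "doi:10.1371/journal.pone.0127851"


def contains_triples(component, entities, doc=DOC):
    """Build one 'contains' triple per entity found in a document component."""
    subject = f"{doc}#{component}"  # joining with '#' is an assumption
    return [Triple(subject, "contains", entity) for entity in entities]


triples = contains_triples("section-i/para-j/sentence-k",
                           ["Windin Creek", "L. rheocola"])
for t in triples:
    print(t.subject, t.predicate, t.obj)
```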

How many facts does a scientific paper contain?

Every entity in a document is a Fact. Each author, each species, each temperature, date, colour. A graph may have 100 facts, or 1000. Perhaps 100 facts / page? A 10-page paper might have 1000 facts. Some chemistry papers have 300 pages of supporting information. So if we read 1 million papers we might get a billion facts – our estimate of 100 million is not hyperbole.
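The back-of-envelope arithmetic can be written out as below. The inputs are the rough guesses from the paragraph above (100 facts per page, 10 pages per paper, 1 million papers), not measurements.

```python
# Rough guesses taken from the text; none of these are measured values.
FACTS_PER_PAGE = 100
PAGES_PER_PAPER = 10
PAPERS = 1_000_000
TARGET = 100_000_000  # the stated aim of 100 million facts

facts_per_paper = FACTS_PER_PAGE * PAGES_PER_PAPER
total_facts = facts_per_paper * PAPERS

print(f"facts per paper: {facts_per_paper:,}")
print(f"total facts:     {total_facts:,}")
print(f"multiple of the target: {total_facts // TARGET}x")
```

Under these guesses the total is ten times the target, so the 100 million figure still holds if the per-page estimate is too high by a factor of ten.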

[1] Reported in PLoS ONE (Roznik EA, Alford RA (2015) Seasonal Ecology and Behavior of an Endangered Rainforest Frog (Litoria rheocola) Threatened by Disease. PLoS ONE 10(5): e0127851. doi:10.1371/journal.pone.0127851).